Introduction

Forget about technology for a moment and think about our everyday lives. Disruptive events rarely happen without precursors. A shift in someone’s behaviour. A sudden emotional spiral. A health symptom dismissed as nothing. A pattern that, in hindsight, was clearly there all along. We don’t always miss the signals; more often, we notice them and dismiss them as one-time exceptions… until it’s too late.

The same holds in cybersecurity, where we often forget that our archnemesis is not technology but humans: adaptive, deliberate, and patient. And humans mostly have detectable, predictable patterns. So why are we still missing the signals?

Behavioural analytics is not (just) anomaly detection

A user usually logs in from London. Today, the login comes from elsewhere in Europe. Flag the deviation. Done, right? Wrong. That’s anomaly detection. A single data point. A blip. Not the full remit of behavioural analytics.

Behavioral analytics is not only about detecting individual anomalies. It is about identifying subtle shifts, hidden correlations, and evolving activity chains that, when connected, reveal adversarial intent long before impact occurs.

Advanced behavioral analysis doesn’t just model activities; it models something far more personal and high-impact: intent. How? Attackers, even when camouflaged, leave behavioral fingerprints. Advanced behavioral analytics helps us understand:

- What patterns repeat across users?

- What event sequences frequently precede fraud?

- What micro-signals cluster together before an attack?

- What activity chains correlate with successful cash-out?
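One way to make the sequence questions above concrete is to count short event chains across labelled sessions and score each chain by how often the sessions containing it ended in fraud. The sketch below assumes hypothetical event names, labels, and a `min_support` cut-off; it is a simple frequency count for illustration, not a production sequence model.

```python
from collections import Counter
from typing import Iterable, Sequence

Chain = tuple[str, ...]


def ngrams(events: Sequence[str], n: int) -> list[Chain]:
    """Return every contiguous chain of n events in one session."""
    return [tuple(events[i:i + n]) for i in range(len(events) - n + 1)]


def chains_preceding_fraud(
    sessions: Iterable[tuple[Sequence[str], bool]],
    n: int = 2,
    min_support: int = 2,
) -> dict[Chain, float]:
    """Map each chain seen in at least min_support sessions to its fraud rate."""
    seen: Counter = Counter()   # sessions containing the chain
    fraud: Counter = Counter()  # fraudulent sessions containing the chain
    for events, is_fraud in sessions:
        for chain in set(ngrams(events, n)):  # count each chain once per session
            seen[chain] += 1
            if is_fraud:
                fraud[chain] += 1
    return {c: fraud[c] / seen[c] for c in seen if seen[c] >= min_support}


# Hypothetical labelled sessions: (event sequence, ended_in_fraud)
sessions = [
    (["login", "add_payee", "transfer"], True),
    (["login", "view_balance", "logout"], False),
    (["login", "add_payee", "transfer", "logout"], True),
    (["login", "view_balance", "transfer"], False),
]
scores = chains_preceding_fraud(sessions)
for chain, rate in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(" -> ".join(chain), f"{rate:.0%}")
```

Chains that appear in too few sessions are dropped, so a single coincidence cannot masquerade as a fraud precursor.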

Threats are now adaptive: Make the move beyond static detection

Signature-based detection, which matches known malware hashes and blacklisted indicators such as IP addresses associated with known attackers, helps identify known threats. But it works in hindsight rather than stopping or preempting attacks. By the time signatures are created, attacks have usually already occurred, which makes this approach inadequate against zero-days and novel, polymorphic malware variants.

Behavioral analytics with AI/ML flips this paradigm: it’s forward-looking and pattern-based.

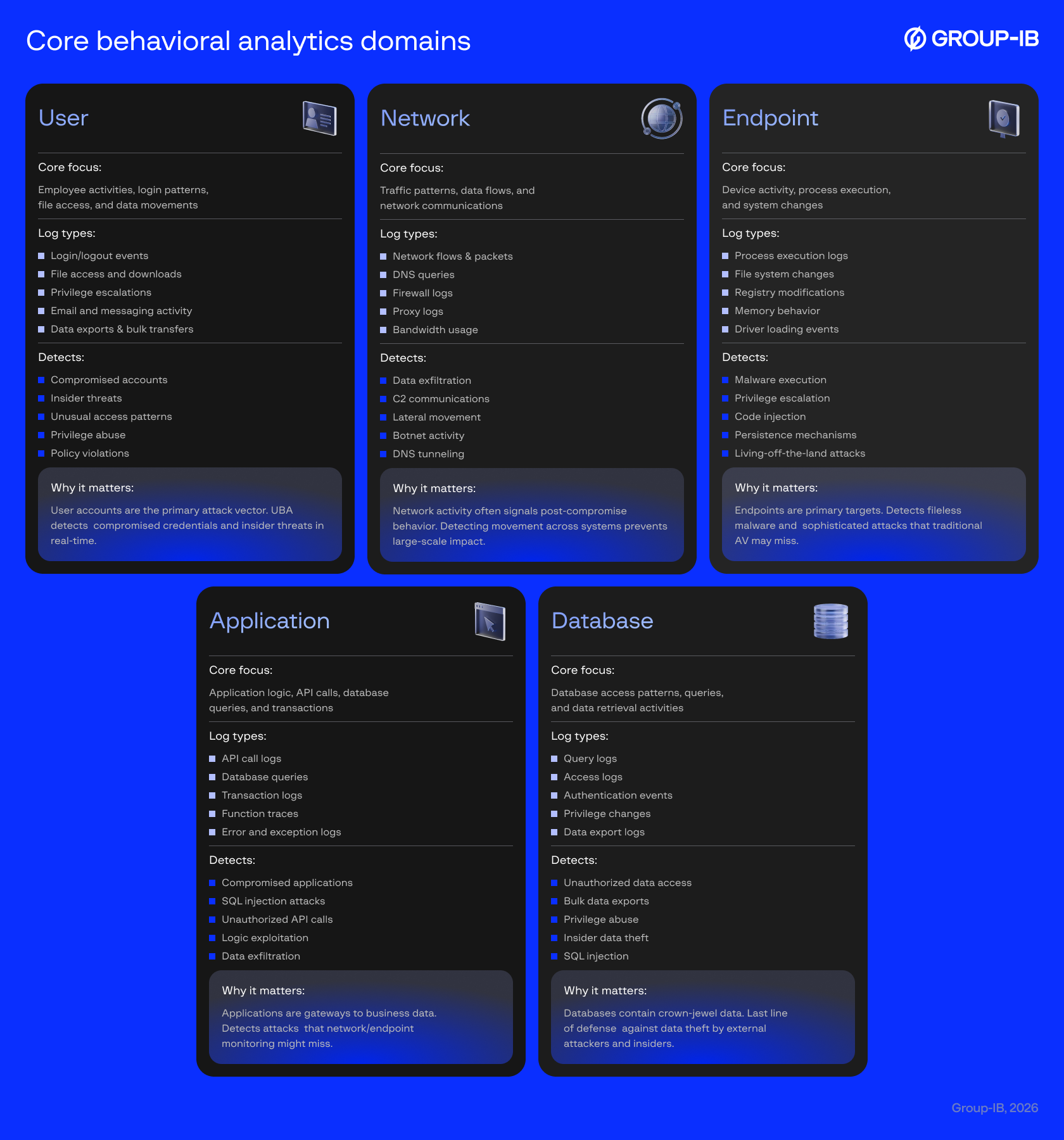

Behavioral analytics, a subfield of data analytics, collects and analyzes event data from digital assets, infrastructure systems, and processes to answer critical defense questions: What is the adversary’s motive? What is their modus operandi? What behavioral patterns emerge? When do transactions occur? How do devices interact? What attack typologies are present? Who are the victim personas?

This deeper understanding enables organizations to better predict and stop malicious activities. At its foundation, behavioral analytics relies on hard data and the ability to assemble logs into a cohesive narrative.

Unlike signature-based detection, which is reactive and requires human analysts to correlate and investigate incidents, behavioral analytics with real-time ML can flag anomalies as they occur (when a user accesses previously untouched documents, when network traffic deviates from baselines, when process behavior appears suspicious). This compresses the detection-to-response timeline substantially.
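The "deviates from baselines" part can be sketched as a per-user running baseline with a z-score test. This is a minimal illustration, assuming a single numeric metric (here, a hypothetical count of documents opened per hour) and a hypothetical threshold of three standard deviations; real deployments model many features at once and typically use learned models rather than a fixed rule.

```python
import math
from dataclasses import dataclass


@dataclass
class Baseline:
    """Running mean and variance of one per-user metric (Welford's online algorithm)."""
    count: int = 0
    mean: float = 0.0
    m2: float = 0.0  # running sum of squared deviations from the mean

    def update(self, x: float) -> None:
        self.count += 1
        delta = x - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (x - self.mean)

    def zscore(self, x: float) -> float:
        if self.count < 2:
            return 0.0  # not enough history to estimate spread
        std = math.sqrt(self.m2 / (self.count - 1))
        return 0.0 if std == 0 else (x - self.mean) / std


def check(baseline: Baseline, x: float, threshold: float = 3.0, min_history: int = 5) -> bool:
    """Flag x if it deviates strongly from the baseline; learn only from unflagged values."""
    flagged = baseline.count >= min_history and abs(baseline.zscore(x)) > threshold
    if not flagged:
        baseline.update(x)  # keep outliers out so one burst cannot drag the baseline upward
    return flagged


# Hypothetical metric: documents opened per hour by one user.
user = Baseline()
for value in [10, 12, 11, 9, 10, 11, 12, 10]:
    check(user, value)
alert = check(user, 80)   # far outside the learned range
normal = check(user, 11)  # within the usual range
print(alert, normal)
```

Excluding flagged values from the update is a deliberate choice: it stops a single burst from shifting the baseline, at the cost of adapting more slowly to genuine changes in behaviour.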

When combined with SOAR (Security Orchestration, Automation, and Response) operations, behavioral analytics becomes truly real-time and adaptive. SOAR can instantly respond to deviations by quarantining accounts, blocking IPs, and terminating sessions — all without human intervention.
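A SOAR playbook is, at its core, a mapping from detection types to ordered response steps. The sketch below assumes hypothetical deviation types, action functions, and a confidence cut-off; it does not call any real SOAR product's API, and the action functions only return descriptions of what a real connector would do.

```python
from typing import Any, Callable

Alert = dict[str, Any]  # hypothetical alert shape: type, user, ip, score


# Hypothetical response actions; a real SOAR platform would invoke its own connectors here.
def terminate_sessions(alert: Alert) -> str:
    return f"terminated sessions for {alert['user']}"


def quarantine_account(alert: Alert) -> str:
    return f"quarantined account {alert['user']}"


def block_ip(alert: Alert) -> str:
    return f"blocked IP {alert['ip']}"


# Illustrative playbook: which actions run, in order, for each deviation type.
PLAYBOOK: dict[str, list[Callable[[Alert], str]]] = {
    "impossible_travel": [terminate_sessions, quarantine_account],
    "c2_beaconing": [block_ip],
    "mass_download": [terminate_sessions, quarantine_account],
}


def respond(alert: Alert, auto_threshold: float = 0.9) -> list[str]:
    """Run the playbook automatically for high-confidence deviations; queue the rest."""
    steps = PLAYBOOK.get(alert["type"])
    if steps is None or alert["score"] < auto_threshold:
        return [f"queued {alert['type']} for analyst review"]
    return [step(alert) for step in steps]


print(respond({"type": "impossible_travel", "user": "alice", "ip": "203.0.113.7", "score": 0.95}))
print(respond({"type": "c2_beaconing", "user": "bob", "ip": "198.51.100.4", "score": 0.40}))
```

Routing low-confidence deviations to an analyst queue is one way to keep fully automated responses from amplifying false positives; the cut-off is a tuning decision, not a fixed value.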

How to detect cybercriminals in your ecosystem when they disguise their intent as legitimate activity?

Behavioral cues give substantial leverage to defenders and adversaries alike. Defenders use behavioral anomalies to detect threats; adversaries use behavioral mimicry to minimize detection and blend malicious actions into normal workflows.

Traditionally, for example, defenders caught phishing attacks by identifying static indicators: grammatical errors, imperfect language nuances, and unrefined social engineering. AI-driven attackers have eliminated these artifacts, creating near-perfect camouflage. They now optimize campaigns based on engagement patterns, dynamically localize content, adapt phishing flows to match the services being impersonated, and replay authentic session behaviors. This level of behavioral manipulation renders user-level anomaly detection ineffective.

As adversaries move toward context-aware behavioral optimization, defenders must evolve their approach correspondingly.

Rather than chasing single-event or single-user anomalies, defenders need to fuse context-aware signals across multiple domains, correlating infrastructure patterns, identity behaviour, and transaction data. The real limiting factor for attackers isn’t behavioural manipulation (they’re getting good at it); it is resources, targeting precision, and the complexity that comes with operating at scale.

Where behavioural analytics is genuinely strong

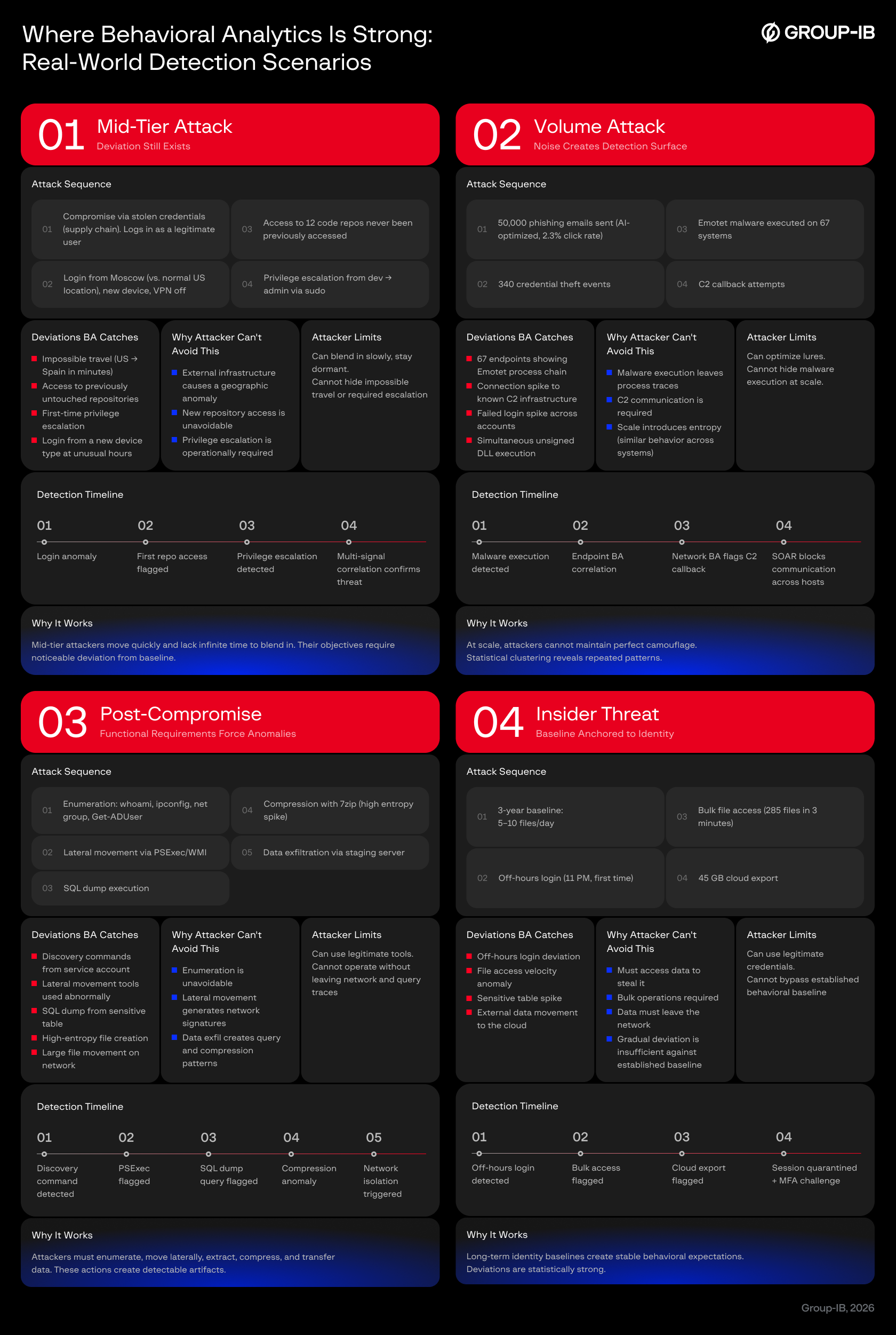

Behavioural analytics also has a strong use case in insider threat detection – one of the costliest and most complex breach scenarios organisations face. But deployed alone, it’s incomplete. Its real strength emerges when correlated with broader risk signals. Where behavioural analytics proves strongest is in:

- Mid-tier attacks, where deviation persists

- Volume attacks, where scale introduces detectable noise

- Post-compromise stages, where lateral movement creates anomalies

- Insider threats, where deviation from established baselines exposes abuse

These four scenarios are where its real strength lies. Behavioural analytics cannot detect everything on its own, but it is very effective when an attacker’s operational requirements force them into detectable behaviours. You can’t compromise an account without accessing it from somewhere. You can’t escalate privileges without creating an escalation anomaly. You can’t steal data without transferring it. You can’t enumerate systems without running discovery commands.

Real-world detection: these scenarios show actual attack typologies where behavioral analytics detects and blocks attempts in real time.

Where behavioural analytics does not excel, and why that matters

While many attacks follow a kill chain similar to the one discussed previously, today’s threats are evolving and less straightforward. The cases above do occur in real life, but they do not account for the scenarios teams witness more often than not. This realistic messiness includes false positives, alert fatigue, partial detection, and even “smarter attack” scenarios.

Attackers are getting smarter: they try to work around behaviour-based detection systems by disguising their attacks as legitimate actions (no bulk actions, legitimate tools, consistent geography via VPN masking, access during normal working hours, privilege escalation through valid insiders, and data exfiltration mixed with normal work and routed through internal file servers rather than external endpoints). Here, detection with behavioural analytics (BA) alone becomes nearly impossible.

Smart attack: Deliberately spreading activity to avoid behavioral tracking

Week 1: Day 1 — Compromise via Supply Chain

Attacker gains access to the dev account via a compromised dependency. Creates a persistent backdoor.

Week 1: Day 3–5 — Reconnaissance (Spread Over 3 Days)

Attacker runs git log and git clone during normal business hours. Only 1–2 queries per hour. Looks like legitimate developer work.

BA: No alert (normal developer behavior)

Week 2: Day 1–3 — Privilege Escalation (Deliberate Slowness)

Attacker requests elevated access through the official ticket system. Waits 2 days. Submits further requests to different admins. Waits 1 more day. Gets escalation without looking suspicious.

BA: No alert (normal request pattern, spread over time)

Week 2–3: Daily — Data Exfiltration (Low-and-Slow)

Attacker exports 10–20 files per day, mixed with legitimate work, spread over 2 weeks. Uses an internal file server (not external). Averages 5 MB/day (well below a developer’s baseline).

BA: No alert (individual queries look normal, spread over time)

Week 3: Day 2 — Lateral Movement Prep (Using Legit Tools)

Uses psexec and wmic (legitimate Windows administration tools) to access other systems. Living off the land: no malware, standard process chains.

BA: No alert (no process anomaly, common tools)

Week 3: Day 5 — Data Exfil via Internal Network

Moves 400 GB to internal file server over 3 days (not external). Then copies to USB during lunch break — no network alert, no BA visibility.

BA: No alert (internal transfer, normal timing, no external signature)

Week 4 — Breach Discovered

Discovered about 3 weeks after the initial compromise by an external partner who noticed the competitive intelligence data. By then, the attacker has scrubbed the logs and is gone.

UNDETECTED FOR 3 WEEKS

Why behavioural analytics is an incomplete detection capability here:

- Attacker deliberately optimized to avoid anomaly triggers

- Low-and-slow activity (5 MB/day, 1–2 queries/hour) is effectively invisible to per-event anomaly detection

- Living-off-the-land tools are legitimate, so there are no process anomalies

- Internal data movement (file server → USB) falls outside behavioural detection scope

- Behavioural analytics assumes attackers will behave badly. Smart attackers don’t. They behave normally.
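The per-day arithmetic behind the low-and-slow stage is easy to reproduce. This sketch applies a one-sided, three-sigma volume rule to a hypothetical developer baseline; the baseline figures and the rule are assumptions for illustration, while the 5 MB/day over two weeks comes from the scenario. Because each day's volume sits below the baseline rather than above it, the rule never fires.

```python
import statistics

# Hypothetical developer baseline: MB moved to file shares per working day over a month.
baseline_mb = [38, 45, 52, 30, 41, 47, 36, 55, 44, 39, 50, 42, 33, 48, 46, 40, 37, 53, 43, 49]
mu = statistics.mean(baseline_mb)
sigma = statistics.stdev(baseline_mb)


def daily_alert(mb: float, k: float = 3.0) -> bool:
    """One-sided per-day rule: exfiltration checks look for spikes above the baseline."""
    return mb - mu > k * sigma


# Attacker's low-and-slow pattern from the scenario: about 5 MB per day for two weeks.
exfil_days = [5.0] * 14
alerts = [daily_alert(mb) for mb in exfil_days]
print(f"days alerted: {sum(alerts)} of {len(alerts)}")
print(f"total moved: {sum(exfil_days):.0f} MB")
```

Each day is judged in isolation, so the attacker only has to stay under the per-day threshold; nothing in a rule of this shape ever looks at the cumulative picture.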

Nation-state and well-resourced APTs. Patient, well-resourced threat actors can afford careful behavioural manipulation, so behavioural analytics won’t catch them at initial breach. In post-compromise activity, if they maintain good operational security (living-off-the-land tools, normal business hours, low-and-slow data movement), they can go undetected for months. Behavioural analytics catches anomalies, and if adversaries don’t make any, there are no signals to catch.

Operational reality: alert fatigue and false positives. BA generates massive amounts of data. Without proper context or correlation, you get alert fatigue and false positives. The result is mixed signals, missed signals, and additional cognitive load as analysts sift through and trace individual signals to judge whether they’re risky.

Behavioural analytics is an essential but incomplete defense layer. Its effectiveness varies by threat profile: it is most effective against insider threats and volume-based attacks, and less effective against well-resourced, patient adversaries. It must therefore be part of a layered defense, not a standalone solution.

The necessary evolution: Cross-domain behaviour modeling

On its own, a behavioural signal in one department tells you little. A vishing call is a customer service issue. Detected malware is an endpoint alert. A financial transfer is a transaction event. In isolation, each is a data point. When fused (user on an active call, malware with remote overlay capability present, financial transfer initiated), they form an attack sequence. The context removes most of the uncertainty.
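The fusion step can be sketched as a per-user, time-windowed correlation across sources. This minimal version assumes a hypothetical event schema, hypothetical signal names, and a 15-minute window, and it only checks that all three signals co-occur; a real system would also weigh their order and strength.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    user: str
    domain: str  # source silo, e.g. "call_centre", "endpoint", "transactions"
    kind: str    # signal type
    ts: float    # seconds since an arbitrary epoch


# Hypothetical pattern built from the example above.
PATTERN = frozenset({"active_call", "remote_overlay_malware", "transfer_initiated"})


def fused_alerts(events: list[Event], window_s: float = 900.0) -> set[str]:
    """Users whose events, across any domains, cover the whole PATTERN within window_s seconds."""
    by_user: dict[str, list[Event]] = {}
    for ev in sorted(events, key=lambda e: e.ts):
        by_user.setdefault(ev.user, []).append(ev)
    hits: set[str] = set()
    for user, evs in by_user.items():
        for i, start in enumerate(evs):
            # Signal types seen in the window that opens at this event.
            kinds = {ev.kind for ev in evs[i:] if ev.ts - start.ts <= window_s}
            if PATTERN <= kinds:
                hits.add(user)
                break
    return hits


events = [
    Event("alice", "call_centre", "active_call", 0),
    Event("alice", "endpoint", "remote_overlay_malware", 120),
    Event("alice", "transactions", "transfer_initiated", 300),
    Event("bob", "transactions", "transfer_initiated", 50),
    Event("bob", "endpoint", "remote_overlay_malware", 5000),
]
print(fused_alerts(events))
```

No single silo sees anything alarming here; the alert only exists once the three streams are joined on the user and the time window.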

Modern fraud campaigns are sequence-driven, especially AI-enabled fraud, which is multi-stage, orchestrated, and executed at runtime. Behavioural signals therefore need to be deeper, enriched, and, most importantly, fused. Detection needs to move from the “presence of malicious code” to the runtime intent of actions in order to produce high-confidence alerts.

Cross-domain behaviour modeling operates by correlating signals across four layers simultaneously:

- Device-level signals: sideloaded installation sources, unauthorized screen capture, remote control behaviours, integrity anomalies

- Identity and session signals: real-time behavioural patterns, session flow deviations, cross-account device overlaps

- Transaction signals: velocity, beneficiary clustering, sequence compression, payee addition patterns

- External threat intelligence: active campaign profiles, payment identifiers linked to criminal operations, infrastructure reuse patterns
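A naive way to combine the four layers is to treat each fired signal as evidence and add its weight in log-odds space. The weights, signal names, and bias below are hypothetical and hand-set; a production model would learn them from labelled data and capture interactions between signals that an additive model ignores.

```python
import math

# Hypothetical log-odds weights, grouped by the four layers listed above.
WEIGHTS: dict[str, dict[str, float]] = {
    "device": {"sideloaded_app": 1.2, "screen_capture": 1.5, "remote_control": 2.0},
    "identity": {"session_flow_deviation": 0.8, "cross_account_device": 1.1},
    "transaction": {"new_payee": 0.7, "high_velocity": 1.0, "sequence_compression": 1.3},
    "threat_intel": {"payee_linked_to_mules": 2.5, "active_campaign_match": 1.4},
}
BIAS = -6.0  # prior log-odds: the vast majority of sessions are legitimate


def fused_risk(fired: dict[str, set[str]]) -> float:
    """Sum the weights of every fired signal across layers and map the total to a probability."""
    logit = BIAS + sum(
        WEIGHTS[layer].get(signal, 0.0)
        for layer, signals in fired.items()
        for signal in signals
    )
    return 1.0 / (1.0 + math.exp(-logit))


single = fused_risk({"transaction": {"new_payee"}})
fused = fused_risk({
    "device": {"remote_control"},
    "transaction": {"new_payee", "sequence_compression"},
    "threat_intel": {"payee_linked_to_mules"},
})
print(f"one transaction signal: {single:.3f}, fused across layers: {fused:.3f}")
```

The additive form shows why fusion matters: a single new payee barely moves the score, while moderate signals from several layers together push it past even odds.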

This shifts detection from event-based alerts to complete attack lifecycle mapping: fake app distribution → installation anomaly → malware activation → vishing call → screen overlay → transaction initiation → cash-out attempt.

Each stage informs the next risk calculation. The result is not an alert but a scored fraud sequence: a continuously recalculated probability that models not just what is happening, but what comes next. The model doesn’t just detect fraud; it predicts the next step in the attack chain.
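The idea of a continuously recalculated, forward-looking probability can be illustrated with a first-order Markov chain over the lifecycle stages listed above. This is a deliberately simple, swapped-in technique, not the vendor's model: the transition probabilities are hypothetical, and the chain is assumed to be acyclic so the recursion terminates. As each new stage is observed, both the most likely next step and the probability of eventual cash-out are recomputed.

```python
from functools import lru_cache
from typing import Optional

# Hypothetical transition probabilities between lifecycle stages.
# "abandoned" and "cash_out" are terminal; the numbers are illustrative, not measured.
TRANSITIONS: dict[str, dict[str, float]] = {
    "fake_app_distribution": {"installation_anomaly": 0.3, "abandoned": 0.7},
    "installation_anomaly": {"malware_activation": 0.6, "abandoned": 0.4},
    "malware_activation": {"vishing_call": 0.5, "abandoned": 0.5},
    "vishing_call": {"screen_overlay": 0.7, "abandoned": 0.3},
    "screen_overlay": {"transaction_initiation": 0.8, "abandoned": 0.2},
    "transaction_initiation": {"cash_out": 0.9, "abandoned": 0.1},
}


def next_step(stage: str) -> Optional[tuple[str, float]]:
    """Most likely next stage and its probability, or None for a terminal stage."""
    options = TRANSITIONS.get(stage)
    if not options:
        return None
    return max(options.items(), key=lambda kv: kv[1])


@lru_cache(maxsize=None)
def p_cash_out(stage: str) -> float:
    """Probability the chain eventually reaches cash-out from this stage."""
    if stage == "cash_out":
        return 1.0
    return sum((p * p_cash_out(nxt) for nxt, p in TRANSITIONS.get(stage, {}).items()), 0.0)


# Score recalculated as each new stage is observed for one hypothetical customer.
for observed in ["installation_anomaly", "vishing_call", "screen_overlay"]:
    step, p_step = next_step(observed)
    print(f"{observed}: next={step} ({p_step:.0%}), P(cash-out)={p_cash_out(observed):.2f}")
```

The probability of cash-out rises sharply as later stages are confirmed, which is what lets a defender act before the final step rather than after it.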

This is the meaningful gap between reactive detection and predictive defense. Teams can act immediately: accounts can be auto-secured before cash-out occurs, and transactions can be rejected at the moment of highest fraud probability rather than investigated afterward.
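Acting at the moment of highest fraud probability reduces, in code, to a policy that maps the current score to a graduated response. The cut-offs and action names below are hypothetical; in practice they would be tuned against fraud losses and customer friction.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    STEP_UP = "step-up authentication"
    HOLD = "hold transaction for review"
    REJECT_AND_SECURE = "reject transaction and lock account"


# Hypothetical cut-offs, checked from strictest to most lenient.
THRESHOLDS = [
    (0.85, Action.REJECT_AND_SECURE),
    (0.60, Action.HOLD),
    (0.30, Action.STEP_UP),
]


def decide(fraud_probability: float) -> Action:
    """Return the strongest action whose cut-off the current score meets."""
    for cutoff, action in THRESHOLDS:
        if fraud_probability >= cutoff:
            return action
    return Action.ALLOW


# Hypothetical score trajectory as a session moves through the attack chain.
for p in (0.05, 0.32, 0.72, 0.90):
    print(f"{p:.2f} -> {decide(p).value}")
```

Because the score is recalculated at every stage, the same session can pass through several tiers, escalating from friction to a hard block only when the evidence supports it.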

What does this look like in practice?

Group-IB Fraud Protection puts this approach into practice, correlating hundreds of risk signals across web, app, device, human behaviour, transaction, network, and external threat intelligence in real time. In deployments where this level of fused, predictive detection is active, fraud on malware-compromised devices has been reduced to a record low of 0.027%.

That number matters not as a vanity figure but as a calibration point. It represents what becomes possible when behavioral signals are cross-referenced and function as an integrated intelligence layer, one where validated real-time anomalies and network-level patterns continuously flow back into fraud scoring models, KYC risk thresholds, and cyber detection logic.
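The feedback loop can be sketched as online learning: each case an analyst validates becomes a labelled example that nudges the scoring model. This assumes a plain logistic-regression scorer updated by stochastic gradient descent, with hypothetical features and learning rate; it illustrates the idea and is not a description of how Group-IB Fraud Protection updates its models.

```python
import math


def predict(weights: dict[str, float], bias: float, features: dict[str, float]) -> float:
    """Logistic-regression fraud probability for one case."""
    z = bias + sum(weights.get(name, 0.0) * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))


def feedback_update(
    weights: dict[str, float],
    bias: float,
    features: dict[str, float],
    confirmed_fraud: bool,
    lr: float = 0.1,
) -> float:
    """One stochastic-gradient step on an analyst-validated case.

    Updates weights in place and returns the new bias.
    """
    error = (1.0 if confirmed_fraud else 0.0) - predict(weights, bias, features)
    for name, value in features.items():
        weights[name] = weights.get(name, 0.0) + lr * error * value
    return bias + lr * error


# Hypothetical model state and a case an analyst has confirmed as fraud.
weights = {"remote_control": 0.5, "new_payee": 0.2}
bias = -2.0
case = {"remote_control": 1.0, "new_payee": 1.0}
before = predict(weights, bias, case)
for _ in range(20):  # e.g. twenty similar confirmed cases flowing back
    bias = feedback_update(weights, bias, case, confirmed_fraud=True)
after = predict(weights, bias, case)
print(f"score for this pattern: {before:.2f} -> {after:.2f}")
```

Confirmed false positives flow back the same way with `confirmed_fraud=False`, pulling the score for that pattern down instead.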

Without that feedback loop, institutions continue to respond to yesterday’s signals. The adversary moves forward. The defense stands still.

Is the shift worth making?

Operational requirements force attackers into detectable behaviours, but the most sophisticated adversaries are already designing around behavioural detection. We cannot catch everything they do, not while individual sessions and device fingerprints sit in isolation. The picture shifts entirely when these signals are fused with external intelligence into a single intelligence layer: isolated signals and activities come together into one coordinated view, revealing attack infrastructure and campaign patterns that were previously hidden.

When intelligence converges into defence action, we see patterns across domains before losses occur, enabling teams to counter:

- Account Takeover

- Business Email

- Account Push

- Mule Networks

Threat signals surfaced:

- Account and Card

- Digital Risk and

Behavioural analytics is not the answer on its own. But it is an essential part of the answer. The signals are there – in devices, sessions, transactions, and the external threat landscape. The gap is not in the data but in the ability to connect it: to move from isolated anomalies to scored fraud sequences, and from reactive alerts to predicted next steps.

In practice, this approach has reduced fraud on malware-compromised devices to a record low of 0.027% — a benchmark that illustrates what becomes possible when behavioural signals operate as part of an integrated intelligence layer.

The adversary has already moved on. The question is whether your defence has, too. Reach out to our experts to integrate a cyber-fused behavioural defence strategy that uncovers risk not just in actions, but in everyday activity, before disruption can take root and scale.